Ranveer, Aamir didn't really criticise Modi. How fact-checkers spotted their deepfakes

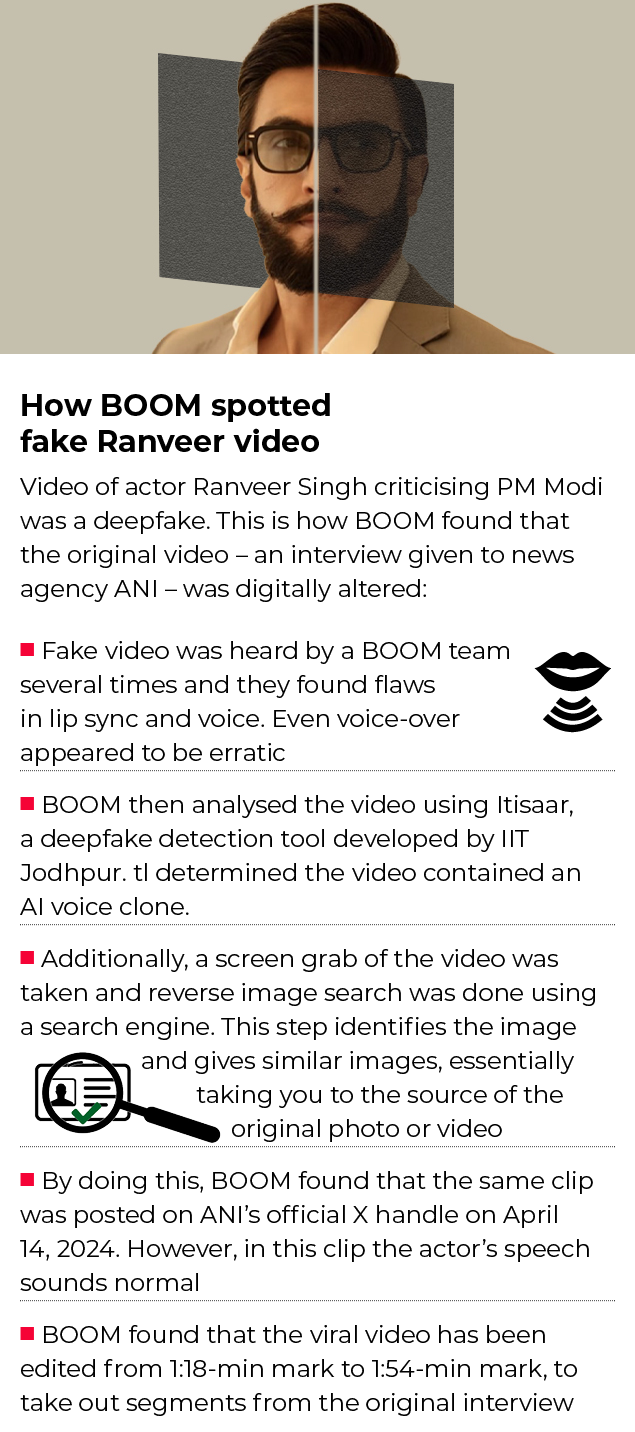

Last month, two separate videos surfaced online showing Bollywood stars Aamir Khan and Ranveer Singh criticising Prime Minister Narendra Modi and endorsing the opposition Congress party. Both actors said the videos were fake and had been digitally altered. Police complaints have been filed in connection with the videos.

In yet another recent video, Union home minister Amit Shah is heard announcing the curtailment of the reservation rights granted to the scheduled castes, scheduled tribes and the other backward classes. Referring to this video, PM Modi said at an election rally that political opponents were using artificial intelligence (AI) “to distort quotes of leaders like me, Amit Shah and JP Nadda to create social discord”.

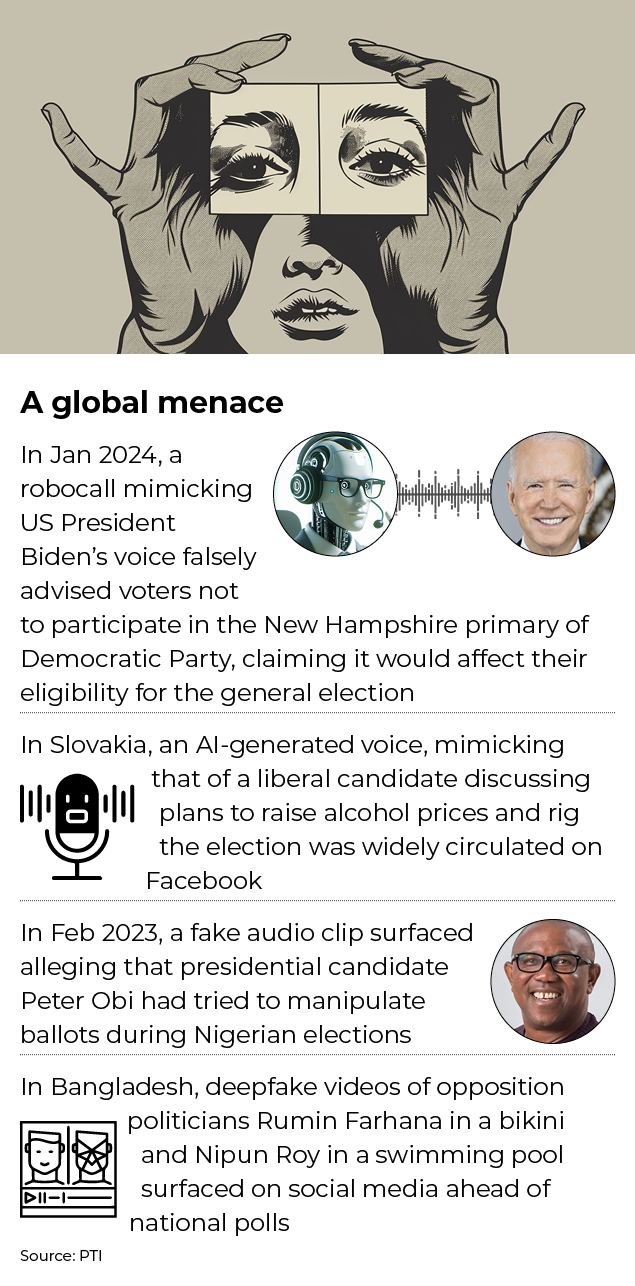

Election-related misinformation is not new to India, but the surge in AI-generated deepfakes this poll season has caused major concern among journalists and fact-checkers.

The videos cited above were viewed more than half a million times on social media and the number of times they have been saved and shared cannot even be tracked.

The World Economic Forum’s ‘Global Risks Report 2024’ has flagged misinformation as the “greatest risk” for many poll-going countries including India.

Political parties in India have long relied on conventional means such as door-to-door outreach, public rallies and posters to connect with voters before polls.

In 2019, social media platforms such as Facebook and WhatsApp were extensively used as campaign tools. This year, for the first time, the power of AI is being harnessed by the parties to reach out to the electorate.

The Bharatiya Janata Party (BJP), for instance, is using AI to translate PM Modi’s speeches into eight different languages.

In 2022, the ministry of electronics and information technology launched ‘Bhashini’, an AI-driven translation app. Its efficacy was demonstrated at an event in December last year, when Modi’s speech at the launch of Kashi Tamil Sangamam in Varanasi was translated from Hindi into Tamil instantaneously.

Parties have also deployed AI-based personalised anchors to publicise election promises across social media platforms. For instance, the Communist Party of India (Marxist) has used its AI anchor ‘Samata’ in poll campaigns in West Bengal.

Dravida Munnetra Kazhagam (DMK) leader and former Tamil Nadu CM M Karunanidhi, who passed away in 2018, was virtually resurrected for the party’s events. The AI avatar of the late DMK leader often felicitates party workers and praises the leadership of his son MK Stalin, the current CM of the southern state.

Amid all this, there is also a surge in the misuse of AI to influence public opinion. TOI+ spoke to journalists and fact-checkers about the challenges posed by AI-based deepfake videos and how to deal with the menace.

A busy season for fact-checkers

Of late, India has seen an exponential rise in election-related fake news.

“On an average, we do four fact-checks per day but at the moment, we are touching 10 a day,” says Karen Rebelo, deputy editor at BOOM, one of India’s leading fact-checking organisations.

According to Rebelo, the trend of fake videos and images was also seen in the 2019 general election and some state elections later.

“But the notable difference in this [Lok Sabha] election is the use of influencers or ‘diffuse actors’ who are not affiliated to the party but they believe in the ideological agenda that the party or the leaders espouse,” she says, adding that such actors are creating and disseminating fake videos.

Syed Nazakat, founder and chief executive officer (CEO) of DataLEADS, another fact-checking organisation, says that the tide of online misinformation has only risen, posing an unprecedented threat to elections and democracy.

Nazakat is part of a project called Shakti, a consortium of news publishers and fact-checkers in India that works to ensure early detection of online misinformation, including deepfakes. The project is supported by the Google News Initiative.

Insights from project Shakti show that roughly 70% of the fact-checked stories are visual content (images or videos), 28% are text-based content and around 2% are deepfake election-related misinformation, says Nazakat.

“The insights gathered from the Shakti collective strongly indicate that combating online misinformation will remain a significant challenge throughout the rest of the election period,” he adds.

Ruby Dhingra, managing editor at Newschecker.in, explains how fake news circulates in election times. “During the elections, we will see fake news getting circulated in a predictable format like – around claims by a party or an individual, speeches by politicians, polling related news and then exit polls,” says Dhingra.

This is the original video posted by ANI on April 14:

And this is the fake:

Detecting fakes – a big challenge

For the past few years, India has been dealing with the problem of “cheapfakes”, which were easy to debunk as the manipulation was haphazardly done. Now, the fakes are getting “better” due to the availability of advanced tools.

These days, even amateurs can make deepfakes using free tools available online. The major challenge for fact-checkers is working out how such videos or photos can be flagged, identified and put into context.

During the 2023 wrestlers’ protest, a morphed photo of the grapplers Vinesh Phogat and her sister Sangeeta emerged. The original photo of the two sisters sitting in a bus after being detained by police was manipulated. Their sombre faces were digitally altered using a face app, showing them smiling.

“This is one of the notable examples of AI-generated fakes,” says Rebelo. Earlier, some degree of coding experience or a tech-savvy temperament was required to create such visuals.

“Now, anyone with a laptop and an internet connection is able to create a fake that can lead to mass damage,” she adds.

Highlighting the challenges faced by fact-checkers, Rebelo says, “The literacy rate of AI-based manipulation is very low. So, fact-checkers don’t even reach the audience at the receiving end of the fake news.”

Dhingra explains how one can anticipate any misinformation before it gets circulated.

“There is something called debunking, in which you look at something that has already gone viral and analyse it to prove that it is fake. And there is pre-bunking in which you can anticipate the misinformation that is bound to happen on a topic and keep material ready.

“For instance, every time a new Covid-19 variant was detected, explainers were put out to inform the readers that there is only so much known about the strain at the moment; anything else is not known yet. Misinformation travels faster when there is an information vacuum,” she says.

A tech-tonic shift

The technology used to create fakes is advancing rapidly, allowing disseminators to carry out convincing and sophisticated manipulations. The advances include better generative models, audio deepfakes, and face re-enactment, which lets creators manipulate facial expressions and movements in real time and so produce more dynamic and expressive deepfake videos.

“Most of the tools used to detect and debunk fake news are developed in the West and they are not yet completely reliable when it comes to Indian languages or even English spoken in deep Indian accents. So, now a lot of the work that goes into it is manual,” says Dhingra.

While existing tools could help fact-checkers to look for the source of the original video and see which parts were manipulated, AI-generated synthetic videos will make it harder to detect such alterations as there won’t be any original video to investigate, says Rebelo.

Narratives that deepfakes build

According to Rebelo, the political class is the biggest disseminator of fake news. The term IT cell was coined with a specific agenda, to target a rival or create a distraction.

“Those who have tasted blood in one election know how effective these tools are in peddling a narrative,” she adds.

Nazakat says deepfakes are employed to build certain narratives for political manipulation and character assassination. They can also be used to commit financial fraud.

“With deepfakes/AI-generated content, they [misinformation peddlers] are trying to change political discourse and influence people’s opinion. For example, the recent video of politician Kamal Nath, which was an old video overlaid with a deepfake audio,” he says.

In the fake video, the Congress leader and former chief minister of Madhya Pradesh can be heard promising a mosque and the reinstatement of Article 370 to Muslims in a meeting. The audio in the clip, claiming to be that of Kamal Nath, is a voice clone and not his own voice, according to fact-checkers.

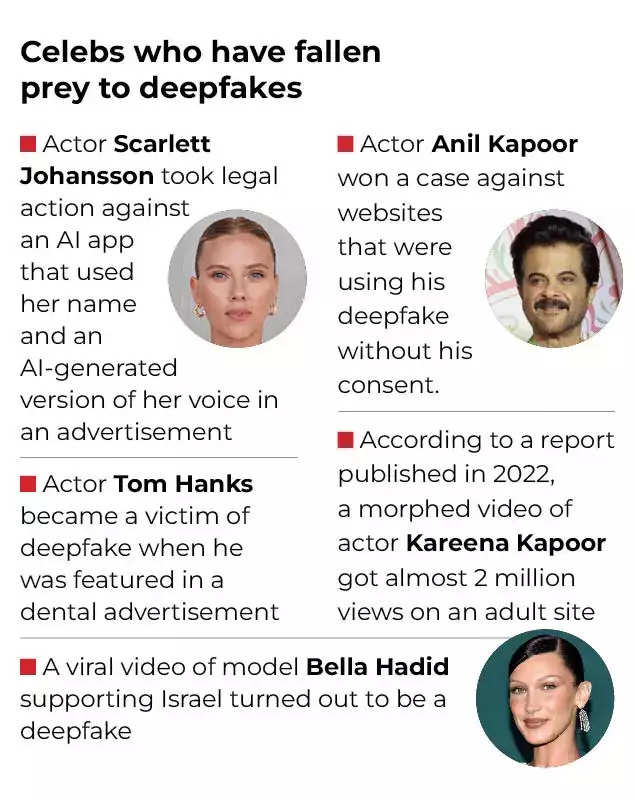

Politicians are not the only targets. Deepfakes are sometimes employed to tarnish the reputation or credibility of other individuals, including public figures, celebrities and ordinary citizens.

The deepfake video of actor Rashmika Mandanna that surfaced last year prompted the Centre to issue an advisory to social media platforms, asking them to identify and remove such content within 36 hours of it being reported.

Lead illustration: Sajeev Kumarapuram